How-To Set Up a Jupyter Notebook on GCP with Granular Access Control to Read from Big Query, Configured with Terraform

A Different Approach to using Service Accounts

I'm a developer from Vancouver, BC who's had an interesting journey in tech starting from support, through cloud infrastructure and project management. Currently I work as an SRE at lightstep helping build and "operationalize" things that helps to guide others towards better o11y :)

Context

In our organization we have data-analysts that need to fetch data from different sources to perform their work. In the last couple of weeks, I worked with a Data Analyst that needed a solution to query data from BigQuery (BQ) datasets using R (a programming language for stastical computing).

What’s BigQuery? An enterprise data warehouse that is specific to Google Cloud Platform (GCP). Useful in circumstances where folks want to run analysis on their data but don’t want to do it on the databases themselves (Most databases are set up to ensure data is read/written reliably - whereas data warehouses are built specifically for analytical operations).

One common pattern is to fill BQ datasets from production databases and run analytical operations on those datasets.

Photo by Akinori UEMURA on Unsplash

Photo by Akinori UEMURA on Unsplash

Constraints

In building / researching a solution for this need, we wanted to work around a couple of constraints:

- Future-proofing: The solution should be able to keep up with future demands and reduce the dependency on local hardware.

- We don’t want analyst laptops to be a limiting factor wherever possible. Using the cloud means leveraging the ability to spin up workloads on hardware with specific requirements as needed.

- Running this in the cloud also means that a user can continue working / access data from anywhere / any laptop (even if they lose access to their device).

- Security - Authentication: The solution must be locked down to specific users.

- The Bigquery Datasets are already subject to security policies that lock down their access. However, as this is another / new mechanism making use of the dataset - ensure that this solution at minimum, does not grant access to any new / unwanted users. Ideally - only grants access to those who require it (and are already within the security / access policy).

- This solution should also allow the ability to query into datasets in other GCP projects that we maintain.

- Security - Authorization: The solution must only allow access to specific DataSets.

- In line with above, even if the requestors are within the security policy to read and write from this dataset, the solution must be locked down to READONLY operations on the data. This is to ensure that another risk of data loss is mitigated where possible.

- Infrastructure-As-Code: The solution should be Terraformed

- No special snowflakes* on our watch! Having this infrastructure terraformed has a lot of benefits, which you can read about here. But for us, means that everything that’s running is codified and can be examined, modified, nuked from one source of truth.

- Cost: Using this notebook shouldn’t be prohibitively expensive.

Goal

Photo by ÁLVARO MENDOZA on Unsplash

Photo by ÁLVARO MENDOZA on Unsplash

Implement a solution that allows a data-analyst to run R code against BigQuery Datasets which meets all of these constraints.

Solution

The majority of this solution is already covered in a GCP article: Data Science with R on GCP EDA - however what this post includes is an approach that builds on Service Account IAM to meet our security requirements, and shows how to achieve this solution with Terraform

- The main character of this solution is the Vertex AI service which allows you to run Jupyter Notebooks (as an IDE for R) on rapidly configurable VM’s. (Futureproofing ✅)

- It’s usually used in AI related workflows like training ML (Machine Learning) models and thus in doing so - has native support for talking to BigQuery.

- These workbooks have access to the

Deep Learningfamily of images which allows you to quickly instantiate notebooks with specific images (including one with the R framework installed!) - These workbooks run on top of regular VM’s that can be configured to specific workload needs (i.e. tweaking processor, memory and disk specs).

- Depending on the hardware used, the costs are minimal (Cost ✅).

- For example, using an

e2-mediuminstance is only $24.46 per month at time of writing. (and that’s with the assumption that the notebook is running 24/7)

- For example, using an

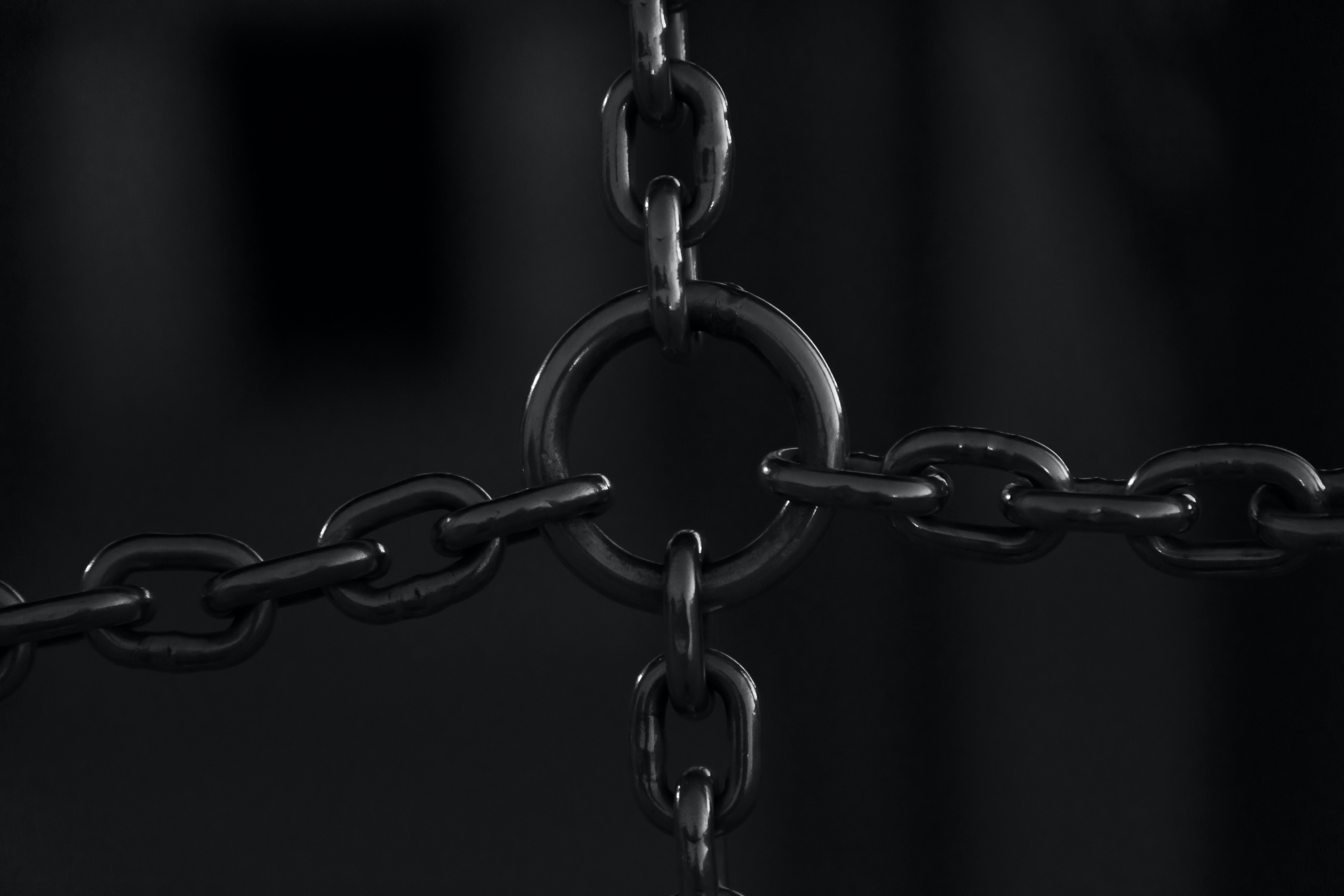

- The constraints for our solution are met by the following:

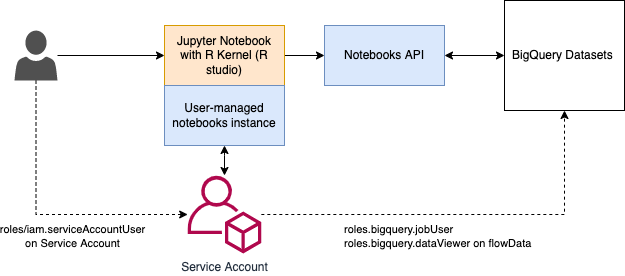

- 🔑🔑🔑 The Vertex AI User Managed Notebook Instance (hereafter referred to as the “notebook”) can be tied to a Service Account (SA) 🔑🔑🔑. By applying access control to this SA we can achieve the constraints as follows:

- Security - Authorization:

- We can lock down who has access to this notebook by gating on who gets to have the

roles/iam.serviceAccountUserrole on the Service Account in GCP IAM. - We can lock down that SA’s access to (1) only the datasets required and (2) READ ONLY operations by assigning the following roles with constraints:

roles.bigquery.jobUser(on the whole project)roles.bigquery.dataVieweron the specific datasets.

- This also allows querying datasets in other GCP projects, by granting roles in thoes projects to this SA.

- This is the major key, as the Service Account can scale up to multiple users by being able to bind a the

roles/iam.serviceAccountUserto any principal which can include users AND groups.

- We can lock down who has access to this notebook by gating on who gets to have the

- Security - Authentication: ✅ **Because our users log in using their Google accounts, the authentication mechanism is taken care of by GCP (using folks’ credentials).

- Security - Authorization:

- 🔑🔑🔑 The Vertex AI User Managed Notebook Instance (hereafter referred to as the “notebook”) can be tied to a Service Account (SA) 🔑🔑🔑. By applying access control to this SA we can achieve the constraints as follows:

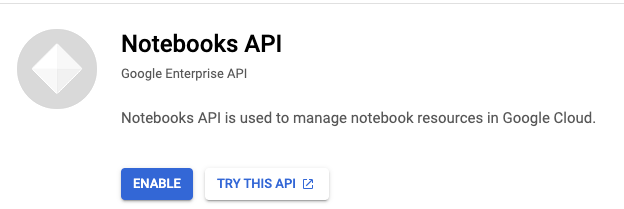

- In order for the Notebook to query BigQuery, the Notebook API must be enabeld

- This is a MANUAL operation that must be done in the GCP console.

- This can all be Terraformed. (Infrastructure as code ✅)

Implementation

1. Enable the Notebooks API

https://console.cloud.google.com/marketplace/product/google/notebooks.googleapis.com

2. Apply the Terraform

locals {

# CHANGEME

project_name = "tutorial-344120" # The project

}

# Note this requires running a gcloud auth application-default login

provider "google" {

project = locals.project_name

}

##1. Create a Service Account

resource "google_service_account" "analyst_notebook" {

account_id = "analyst-notebook"

display_name = "SA for analysts to access BQ datasets via Vertex notebook"

}

##2. Create a User Managed Notebook that uses that Service Account

resource "google_notebooks_instance" "analyst_notebook" {

name = "analyst-rstudio-notebook"

location = "us-west1-a"

#CHANGEME

machine_type = "e2-medium"

vm_image {

project = "deeplearning-platform-release"

image_family = "r-latest-cpu-experimental"

}

service_account = google_service_account.analyst_notebook.email

}

##3A Allow ability to run BQ jobs on all datasets in project

resource "google_project_iam_member" "project" {

project = locals.project_name #CHANGEME if the target datasets are in diff project.

role = "roles/bigquery.jobUser"

member = "serviceAccount:${google_service_account.analyst_notebook.email}"

}

##3B Allow ability to READ on a SPECIFIC BQ dataset.

resource "google_bigquery_dataset_iam_member" "analyst_notebook_data_viewer" {

project = locals.project_name #CHANGEME, if the target datasets are in diff project.

dataset_id = "rick_morty"

role = "roles/bigquery.dataViewer"

member = "serviceAccount:${google_service_account.analyst_notebook.email}"

}

##4. Allow only the intended user to use the SA and by extension, the notebook

resource "google_service_account_iam_binding" "analyst_notebook_service_account_binding-iam" {

service_account_id = google_service_account.analyst_notebook.name

role = "roles/iam.serviceAccountUser"

members = [

#CHANGEME - who should have access to assume the Service Account (and access the Notebook)

"user:thilina.ratnayake@email.com",

]

}

Test

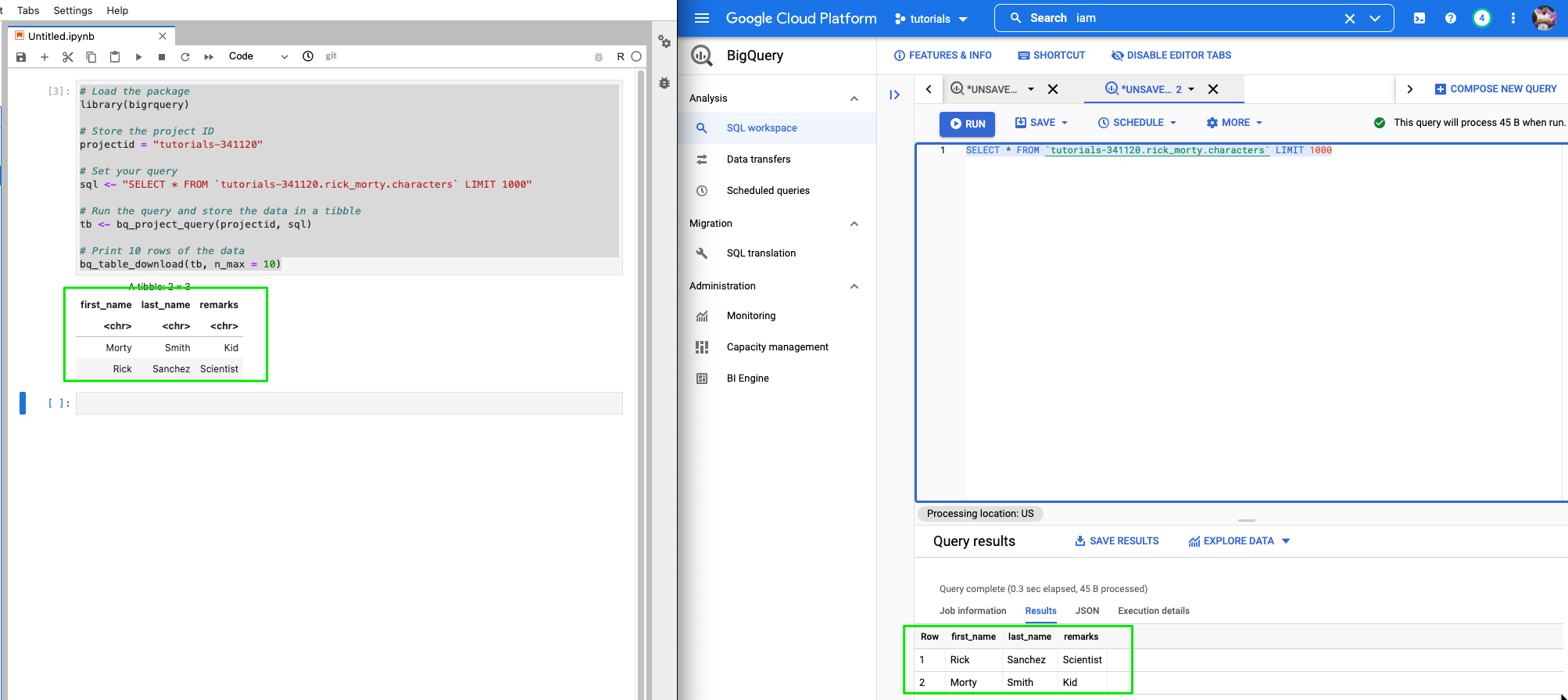

1. Can we open the notebook and query the BigQuery dataset using R?

The R code to query a BQ dataset can be found here: Use R with BigQuery

Yes 🕺

2. Can anyone else log attempt to open up the Jupyter notebook?

Nope! 🔒 ✅

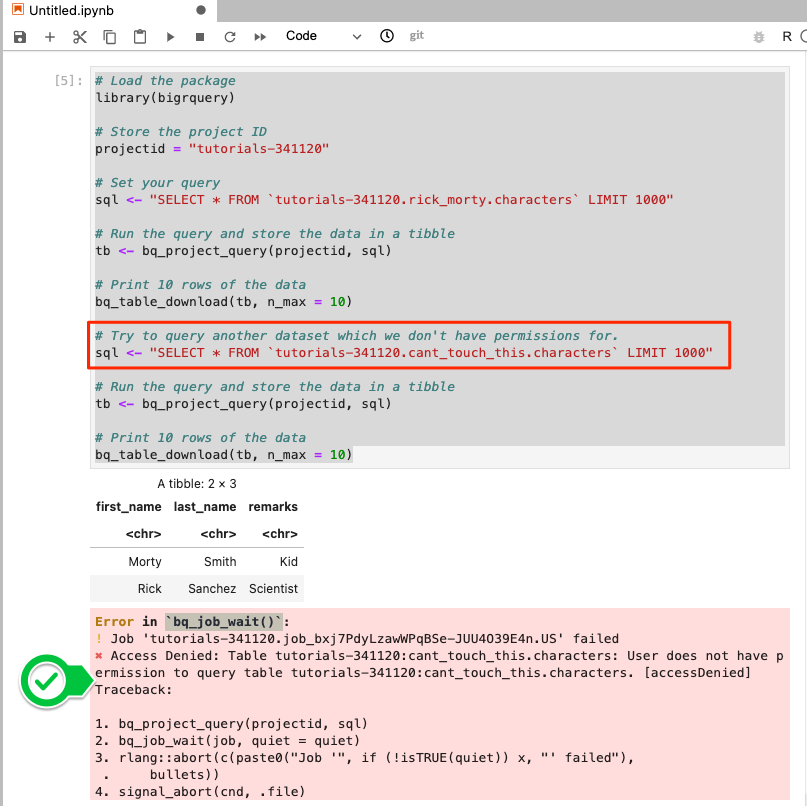

3. Can we attempt to access other datasets? (Outside of what is specified in the IAM policy?)

Also Nope!🔒 ✅

Why not use a Service Account?

Create a Service Account, let the user download the SA key and use it when connecting to the database from their device.

We stayed away from Service Account keys primarily for the number of risks that they add to the security story.

You can read more about those risks here: https://cloud.google.com/iam/docs/best-practices-for-securing-service-accounts

Using a Service Acount key with local device also means losing out on a couple of features;

- No infrastructure as code

- Hardware is a constraint - lack of spec / loss is a risk.

Photo by Marliese Streefland on Unsplash

Photo by Marliese Streefland on Unsplash

Conclusion

Service Accounts can be great. They are a good approach if you need to represent non-human users or persistent access to a system.

Service Account keys...not so much. They are gross, and icky, and very easy to lose to become a security risk.

When advantageous, use cloud resources to fill the needs of your users as they bring a couple of benefits:

- Existing auth mechanisms

- Ease of configuration

- Infrastructure-as-code

In this case, we combine both and use a Service Account specifically because of it's ability to be a single target to apply our security policies to.

The key feature that enabled use to this solution was GCP’s ability to tie a User Managed Notebook Instance to a Service Account which we could then apply our access policies onto.